Evaluation - Wikipedia, the free encyclopedia.
Evaluation is a systematic determination of a subject's merit, worth and significance, using criteria governed by a set of standards. It can assist an organization, program, project, or any other intervention or initiative in two ways. First, it can assess any aim, realisable concept/proposal, or alternative, to help in decision-making. Second, it can ascertain the degree of achievement or value in regard to the aim, objectives, and results of any such action that has been completed. It is long term and done at the end of a period of time.
Contents: Definition; Standards; Perspectives of Evaluation; Approaches; Classification of approaches; Summary of approaches; Pseudo-evaluation; Objectivist, elite, quasi-evaluation; Objectivist, mass, quasi-evaluation; Objectivist, elite, true evaluation; Objectivist, mass, true evaluation; Subjectivist, elite, true evaluation; Subjectivist, mass, true evaluation.
King, Anthony Sims, & David Osher. The goal of this section of our website is to provide a brief conceptual background for cultural competence, and to illustrate the elements of cultural competence in…
The updated competency assessments were revised to reflect the 2014 core competencies and to allow public health practitioners to explore their skill levels.
SAMPLE POLICY & PROCEDURE DRAFT 10/16/06
Legal Issues: PICC Line and Midline
Program Outline:
1. State regulations regarding PICC Line or Midline placement
2. Nursing qualifications to place a PICC Line or Midline
3. …
Cultural Competency Assessment, developed by Kelly Bjoralt, OTS, and Kristy Henson, OTS, 2008.
Assessment of Organizational Cultural Competence. This brochure provides a definition of cultural competency and health care.
The Office of Minority Health (OMH) advises the Secretary and the OPHS on public health issues affecting American Indians and Alaska Natives, Asian Americans, Native Hawaiians and other Pacific Islanders, and Blacks/African Americans…
Defining competency-based evaluation objectives in family medicine: Dimensions of competence and priority topics for assessment.
1.0 Executive Summary: The evaluation of the Community Safety Officer (CSO) pilot program, covering activities from July 2008 to December 2009, was conducted by the RCMP National Program Evaluation Services.
Outlines the Overview of the APS Competency Model, Understanding the Behavioural Scale, and the Behavioural Scale in the APS Competency Model.
Contents (continued): Client-centered; Methods and techniques; See also; References; External links.
Definition
Evaluation looks at the original objectives. It also looks at what is either predicted or what was accomplished, and how it was accomplished.
907 KAR 1:450 INCORPORATION BY REFERENCE, MARCH 2005 EDITION: MEDICAID SERVICES MANUAL FOR NURSE AIDE TRAINING AND COMPETENCY EVALUATION PROGRAM, KENTUCKY MEDICAID PROGRAM, Cabinet for Health and Family Services, Department for…
Evaluation can therefore be formative, that is, taking place during the development of a concept or proposal, project or organization, with the intention of improving the value or effectiveness of the proposal, project, or organisation. It can also be summative, drawing lessons from a completed action, project, or organisation at a later point in time or circumstance.
Evaluation is inherently a theoretically informed approach (whether explicitly or not), and consequently any particular definition of evaluation would have to be tailored to its context. Having said this, evaluation has been defined as a systematic, rigorous, and meticulous application of scientific methods to assess the design, implementation, improvement, or outcomes of a program.
It is a resource-intensive process, frequently requiring evaluator expertise, labor, time, and a sizable budget. The core of the problem is thus defining what is of value. The central reason for the poor utilization of evaluations is arguably…
Evaluators may encounter complex, culturally specific systems resistant to external evaluation. Furthermore, the project organization or other stakeholders may be invested in a particular evaluation outcome. Finally, evaluators themselves may encounter…
The Joint Committee on Standards for Educational Evaluation has developed standards for program, personnel, and student evaluation. The Joint Committee standards are broken into four sections: Utility, Feasibility, Propriety, and Accuracy. Various European institutions have also prepared their own standards, more or less related to those produced by the Joint Committee. They provide guidelines about basing value judgments on systematic inquiry, evaluator competence and integrity, respect for people, and regard for the general and public welfare.
The principles run as follows:
Systematic Inquiry: evaluators conduct systematic, data-based inquiries about whatever is being evaluated. This requires quality data collection, including a defensible choice of indicators, which lends credibility to findings. It also applies to the choice of methodology, which should be consistent with the aims of the evaluation and provide dependable data. Furthermore, the utility of findings is critical. The information obtained by evaluation should be comprehensive and timely, so that it provides maximal benefit and use to stakeholders.
This requires that evaluation teams comprise an appropriate combination of competencies, so that varied and appropriate expertise is available for the evaluation process. It also requires that evaluators work within their scope of capability.
A key element of this principle is freedom from bias in evaluation. This is underscored by three principles: impartiality, independence, and transparency.
Independence is attained in two ways. First, independence of judgment must be upheld, so that evaluation conclusions are not influenced or pressured by another party. Second, conflicts of interest must be avoided, so that the evaluator does not have a stake in a particular conclusion. Conflict of interest is at issue particularly where evaluations are funded by bodies with a stake in their conclusions. Such funding is seen as potentially compromising the independence of the evaluator. Evaluators may be familiar with agencies or projects that they are required to evaluate. Even so, independence requires that they not have been involved in the planning or implementation of the project. A declaration of interest should be made, stating any benefits from, or association with, the project. Independence of judgment must be maintained against any pressures brought to bear on evaluators, for example by project funders wishing to modify evaluations so that the project appears more effective than…
Impartiality requires taking due input from all stakeholders involved. It also requires that findings be presented without bias, with a transparent, proportionate, and persuasive link between findings and recommendations. Thus evaluators are required to limit their findings to what the evidence supports. One mechanism to ensure impartiality is external and internal review. Such review is required for significant evaluations, where significance is determined in terms of cost or sensitivity. The review is based on the quality of the work and on the degree to which a demonstrable link is provided between findings and recommendations.
Transparency requires that the evaluation document be easy to access and read. It should clearly explain the evaluation methodologies, approaches, sources of information, and costs.
One example of how such respect is demonstrated is respecting local customs, e.g. …
The wider public should have access to evaluation documents, so that discussion and feedback are enabled.
The various funds, programmes, and agencies of the United Nations have a mix of independent, semi-independent, and self-evaluation functions. These have organized themselves as a system-wide UN Evaluation Group (UNEG). There is also an evaluation group within the OECD-DAC, which endeavors to improve development evaluation standards.
Michael Quinn Patton advanced the idea that the evaluation procedure should be directed towards activities, characteristics, outcomes, the making of judgments on a program, improving its effectiveness, and informed programming decisions.
Founded on another perspective of evaluation by Thomson and Hoffman in 2… This would include the program lacking a consistent routine, or the concerned parties being unable to reach agreement regarding the purpose of the program. It would also include an influencer or manager refusing to incorporate relevant, important central issues within the evaluation.
Approaches
Many of the evaluation approaches in use today make truly unique contributions to solving important problems, while others refine existing approaches in some way.
Classification of approaches
Important principles of this ideology include freedom of choice, the uniqueness of the individual, and empirical inquiry grounded in objectivity. He also contends that they are all based on subjectivist ethics, in which ethical conduct is based on the subjective or intuitive experience of an individual or group. One form of subjectivist ethics is utilitarian, in which… Another form of subjectivist ethics is intuitionist/pluralist, in which no single interpretation of…
The objectivist epistemology is associated with the utilitarian ethic. In general, it is used to acquire knowledge that can be externally verified (intersubjective agreement) through publicly exposed methods and data. The subjectivist epistemology is associated with the intuitionist/pluralist ethic. It is used to acquire new knowledge based on existing personal knowledge, and on experiences that either are (explicit) or are not (tacit) available for public inspection. House then divides each epistemological approach into two main political perspectives. Approaches can take an elite perspective, focusing on the interests of managers and professionals. Alternatively, they can take a mass perspective, focusing on consumers and participatory approaches.
Stufflebeam and Webster place approaches into one of three groups, according to their orientation toward the role of values and ethical consideration. The political orientation promotes a positive or negative view of an object regardless of what its value actually is and might be. The questions orientation includes approaches that might or might not provide answers specifically related to the value of an object. The values orientation includes approaches primarily intended to determine the value of an object.
They are based on an objectivist epistemology from an elite perspective. Six quasi-evaluation approaches use an objectivist epistemology. Accountability takes a mass perspective. Seven true evaluation approaches are included. Two approaches, decision-oriented and policy studies, are based on an objectivist epistemology from an elite perspective. Consumer-oriented studies are based on an objectivist epistemology from a mass perspective. Finally, adversary and client-centered studies are based on a subjectivist epistemology from a mass perspective.
Summary of approaches
The organizer represents the main considerations or cues practitioners use to organize a study.
The purpose represents the desired outcome for a study at a very general level. Strengths and weaknesses represent other attributes that should be considered when deciding whether to use the approach for a particular study. The following narrative highlights differences between approaches grouped together.

Summary of approaches for conducting evaluations

| Approach | Organizer | Purpose | Key strengths | Key weaknesses |
| --- | --- | --- | --- | --- |
| Politically controlled | Threats | Get, keep or increase influence, power or money | Secure evidence advantageous to the client in a conflict | |

0 Comments

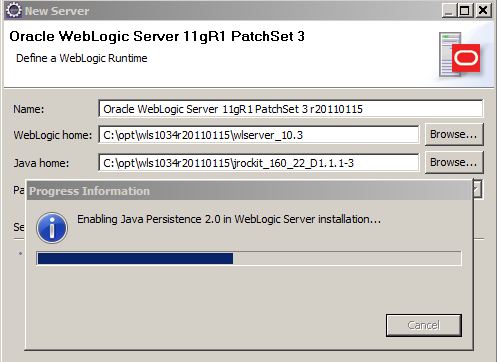

WebLogic 1. 0. 3. Action: Download and apply the upgrade patch.

Install WebLogic PSU T5F1 (UPDATE 10.3.6.0.8)

Recently I was asked to apply the latest PSU on top of Oracle WebLogic Server (WLS) 11gR1 (10.3.6) on RHEL 6. As you know, the patch set updates (PSUs)…

Steps.

1. Download the upgrade patch from MetaLink. The patch number is 1… You can also search for the correct patch in MetaLink using the…

EclipseLink/Examples/JPA/WebLogic Web Tutorial: Apply Smart Update Patch - Recommended for 10.3.0.0. Retrieved from 'http://wiki.eclipse.org/index.php?title=EclipseLink/Examples/JPA/WebLogic.

Patch WebLogic 10.3.6 to 12.2.1.0.0, by Juergenkress-Oracle on Feb 12, 2016.

This document defines minimum releases and patches for the Oracle WebLogic Server component of Oracle Fusion Middleware to address the…

WebLogic Server Rolling Upgrade.

I've been applying patches individually as they become available, rather than waiting for the cumulative patch updates.

Oracle WebLogic Server 11g Release 1 (10.3.5) Patch Set 4 seems to be out! There is even still an old Coherence 3.6 version bundled with the download.

Applying Patch 18040640 - SU Patch

Unzip the downloaded file. The resulting file would be wls… Back up the MW (Middleware home) folder and the Inventory directory: shut down all the WLS services, then back up the directories. It is always good to have a backup in place before a major upgrade. Invoke the upgrade installer and follow the instructions in the installer (java -d…). All the screens are self-explanatory. Click Complete. Files will now be upgraded to the 1… maintenance level. Start up the WebLogic Admin Server. The startup log will show the latest maintenance level of 1…
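The backup step lends itself to a small script. The following is a minimal Python sketch using only the standard library. It assumes you pass in your own Middleware home and Oracle Inventory paths. The helper name `backup_dirs` and the example paths below are hypothetical, not values from this post. The sketch only archives directories. It does not stop WebLogic or run the upgrade installer, so shut the servers down first, as described above.

```python
import tarfile
import time
from pathlib import Path


def backup_dirs(dirs, dest_root):
    """Archive each directory in `dirs` to a timestamped .tar.gz under `dest_root`.

    Hypothetical helper for the "back up before upgrading" step; it does not
    stop servers or touch WebLogic itself. Returns the archive paths created.
    """
    dest = Path(dest_root)
    dest.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%d-%H%M%S")
    archives = []
    for index, d in enumerate(dirs):
        src = Path(d)
        # Fail loudly on a wrong path instead of silently skipping the backup.
        if not src.is_dir():
            raise FileNotFoundError(f"backup source is not a directory: {src}")
        # The index prefix keeps two sources with the same base name from
        # overwriting each other within one run.
        out = dest / f"{index}-{src.name}-{stamp}.tar.gz"
        with tarfile.open(str(out), "w:gz") as tar:
            # Store entries under the directory's own name, not its full path.
            tar.add(str(src), arcname=src.name)
        archives.append(out)
    return archives
```

For example, `backup_dirs(["/u01/app/oracle/Middleware", "/u01/app/oraInventory"], "/backup/wls")` would write one compressed archive per directory (all three paths are placeholders). If the upgrade fails, you can restore by extracting those archives back into place. Raising on a missing directory is a deliberate choice: a mistyped path should stop the procedure before the upgrade, not after.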

Archives

December 2016

RSS Feed
